I just keep hitting walls when it comes to my fabric simulation! While reading this article on position based dynamics, I came across the concept of local linearization of nonlinear functions. The author mentioned that:

It is important to notice that PBD also linearizes the constraint function but individually for each constraint. The constraint equation is approximated by:

$$C(\mathbf{p} + \Delta\mathbf{p}) \approx C(\mathbf{p}) + \nabla_{\mathbf{p}} C(\mathbf{p}) \cdot \Delta\mathbf{p}$$

The problem, as always, is that this concept was unknown to me. 🤦♂️

We have already talked about nonlinear functions when discussing constraint functions in this article. We will continue this discussion by looking at how to simplify these functions through linearization.

Why should I care? 🙋 Solving nonlinear equations can be expensive, depending on their complexity. Linearization replaces a nonlinear function, near a chosen point, with a linear function that approximates it there, so we can work with much simpler linear equations. Those are easier to solve and cost fewer resources!

⚠️ Although I described linearization of a non-linear function as some sort of magic performance booster, be sure that it makes sense for your application!

Linearization with tangential planes

To start, let’s quickly define the difference between a linear function and a nonlinear function:

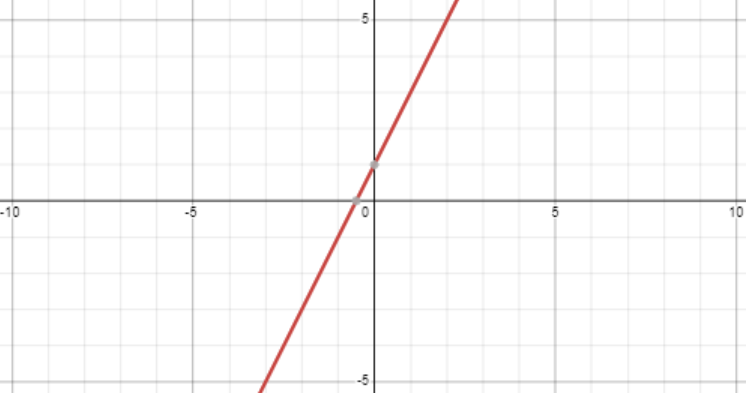

- Linear - a function that makes a straight line when drawn. For example, let’s take a look at this graph together for the function :

Note that these functions take the form:

For example:

You may also notice that the partial derivatives are constant. For example — a function like will have constant partial derivatives:

💡 Interestingly, functions like these whose graph does not pass through the origin are technically called affine functions, because in the strict (linear algebra) sense a linear function must pass through the origin.

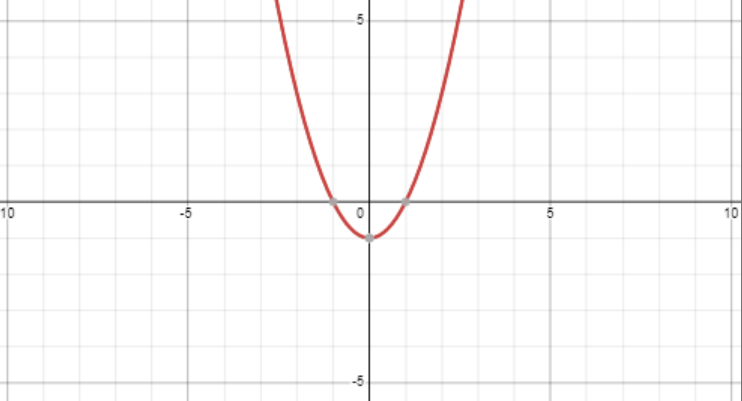

- Nonlinear - a function whose graph is not a straight line (or a flat plane) when drawn. Here is the non-linear function :

Unlike linear functions, the partial derivatives of a non-linear function are not all constant: their values depend on the point where they are evaluated. Here are the partial derivatives of a function :
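To make the difference concrete, here is a minimal Python sketch that estimates partial derivatives numerically at a few points. The two example functions, `linear` and `nonlinear`, are hypothetical stand-ins I picked for illustration; they are not necessarily the ones plotted in this article.

```python
def partial_derivatives(f, x, y, h=1e-6):
    """Estimate (df/dx, df/dy) at (x, y) with central differences."""
    df_dx = (f(x + h, y) - f(x - h, y)) / (2 * h)
    df_dy = (f(x, y + h) - f(x, y - h)) / (2 * h)
    return df_dx, df_dy


# Hypothetical example functions, chosen only for illustration.
def linear(x, y):
    return 2 * x + 3 * y + 1


def nonlinear(x, y):
    return x ** 2 + y ** 2


for point in [(0.0, 0.0), (1.0, 2.0), (-3.0, 5.0)]:
    lin = partial_derivatives(linear, *point)
    non = partial_derivatives(nonlinear, *point)
    # The linear function gives roughly (2, 3) everywhere,
    # while the nonlinear one gives roughly (2x, 2y), which moves with the point.
    print(point, "linear:", lin, "nonlinear:", non)
```

The estimates for `linear` stay the same wherever you evaluate them, while those for `nonlinear` change from point to point.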

Linearization of a nonlinear function can be summarized as follows: we would like to find the plane that is tangent to the graph of the nonlinear function at a given point. The function describing this plane serves as the linear approximation near that point.

To clarify, here is the graph for the nonlinear function (blue) and a tangent plane represented by the function (purple) :

At the point , the two functions intersect. We can say that at the point the function is linearized as .

However, this linearization will get worse the further we get from the point . This is why we should say that the function is linearized locally at the point .
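A small Python sketch can show how the approximation degrades with distance. It assumes a hypothetical function `f(x, y) = x² + y²` linearized at the point (1, 1), which is not necessarily the example plotted above; the tangent plane is hard-coded from `f(1, 1) = 2` and the partial derivatives `2x = 2` and `2y = 2` at that point.

```python
def f(x, y):
    # Hypothetical nonlinear function used only for this illustration.
    return x ** 2 + y ** 2


def tangent_plane(x, y):
    # Tangent plane of f at (1, 1): value 2, slope 2 in x and slope 2 in y.
    return 2 + 2 * (x - 1) + 2 * (y - 1)


def approximation_error(distance):
    """Absolute error after moving `distance` away from (1, 1) along both axes."""
    x = y = 1 + distance
    return abs(f(x, y) - tangent_plane(x, y))


distances = (0.0, 0.1, 0.5, 1.0)
errors = [approximation_error(d) for d in distances]

for d, e in zip(distances, errors):
    print(f"distance {d}: error {e:.4f}")
```

For this particular function the error works out to twice the squared distance, so it is zero at the point itself and grows quickly as we move away.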

Since we are now more familiar with the concept of linearization, we can examine it more closely. Remember that our goal is to understand this equation:

$$C(\mathbf{p} + \Delta\mathbf{p}) \approx C(\mathbf{p}) + \nabla_{\mathbf{p}} C(\mathbf{p}) \cdot \Delta\mathbf{p}$$

The tangent plane

In the last section, I used a function to describe a plane. However, it seems a bit too magical. 🪄

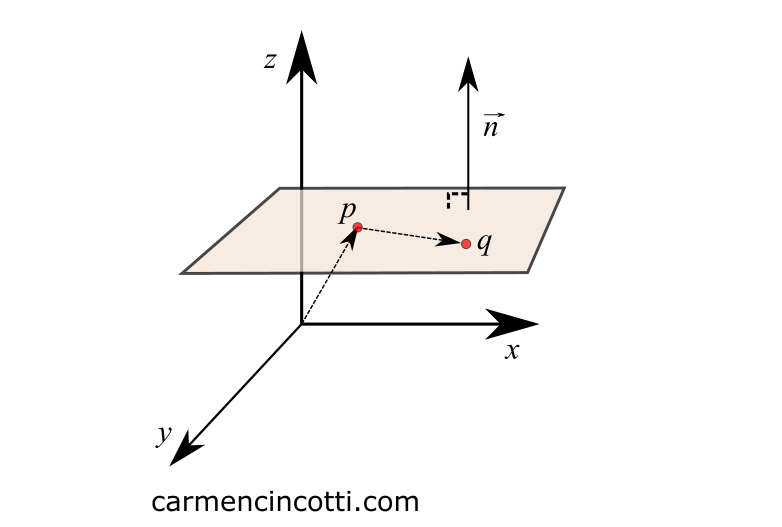

How do we find the equation of a plane? To start, here is the equation of a plane in its entirety:

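By using a normal vector $\vec{n} = \langle a, b, c \rangle$ and two points $P_0 = (x_0, y_0, z_0)$ and $P = (x, y, z)$ that lie on the plane, it is possible to find its equation.

Let $\vec{r_0} = \langle x_0, y_0, z_0 \rangle$ and $\vec{r} = \langle x, y, z \rangle$ be the position vectors of those two points. The vector $\vec{r} - \vec{r_0}$ lies entirely on the plane.

We know that the dot product between two perpendicular vectors is equal to 0, and the normal vector is perpendicular to every vector that lies on the plane. This leaves us with the following form:

$$\vec{n} \cdot (\vec{r} - \vec{r_0}) = 0$$

Finally, I will rewrite this equation in a form where the vector $\vec{r_0}$ is the intersection position of the plane and a nonlinear function:

$$a(x - x_0) + b(y - y_0) + c(z - z_0) = 0$$

Keep this equation in mind because we will see it in the next part!

💡 This equation is often written as follows, where $d = ax_0 + by_0 + cz_0$:

$$ax + by + cz = d$$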
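As a quick check of the idea, here is a minimal Python sketch that builds a plane from a normal vector and one point on it, using the condition that the normal is perpendicular to every vector lying on the plane. The helper names (`dot`, `plane_from_normal_and_point`, `is_on_plane`) and the sample numbers are my own assumptions for illustration.

```python
def dot(u, v):
    """Dot product of two 3D vectors given as tuples."""
    return sum(a * b for a, b in zip(u, v))


def plane_from_normal_and_point(normal, point):
    """Return (a, b, c, d) such that a*x + b*y + c*z = d describes the plane."""
    a, b, c = normal
    d = dot(normal, point)  # d = a*x0 + b*y0 + c*z0
    return a, b, c, d


def is_on_plane(plane, point, tol=1e-9):
    """True if the point satisfies a*x + b*y + c*z = d within a tolerance."""
    a, b, c, d = plane
    return abs(dot((a, b, c), point) - d) <= tol


# Hypothetical normal vector and point, chosen only for illustration.
normal = (1.0, -2.0, 3.0)
p0 = (1.0, 1.0, 1.0)

plane = plane_from_normal_and_point(normal, p0)  # d = 1 - 2 + 3 = 2
print(plane)
print(is_on_plane(plane, p0))               # the defining point lies on the plane
print(is_on_plane(plane, (2.0, 0.0, 0.0)))  # 1*2 = 2, so this point is on it too
print(is_on_plane(plane, (0.0, 0.0, 0.0)))  # 0 != 2, so the origin is not
```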

Local linearization

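Now that we know the equation of a plane, we can build on this knowledge. Suppose we want to approximate the earlier nonlinear function $f(x, y)$ by finding its local linear approximation.

To do this, we want to find the equation of a plane that is tangent to the graph of $f$ at the point $(x_0, y_0)$.

The inputs $(x_0, y_0)$ give us the value $z_0 = f(x_0, y_0)$. So we can say that the plane and the function intersect at the point $(x_0, y_0, z_0)$.

Then, we can rewrite the equation of a plane, $a(x - x_0) + b(y - y_0) + c(z - z_0) = 0$, to get rid of the variable $c$ and to separate $z$. Dividing by $c$ (assuming $c \neq 0$) and renaming $A = -a/c$ and $B = -b/c$ gives:

$$z = z_0 + A(x - x_0) + B(y - y_0)$$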

How shall we continue? 🤔

An important characteristic of a tangential curve

Well, the most important thing to remember when talking about tangential curves is that a curve and its tangential counterpart must have the same slope at the point of intersection!

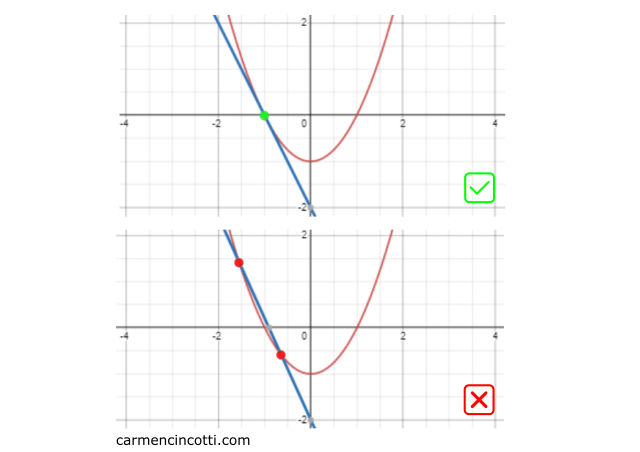

Otherwise, they cannot be tangential. Look at these two 2D graphs:

- The top graph shows two curves that barely touch at a single point, which means that the blue curve is tangent to the red curve. ✅

- The bottom graph has two intersections, which means that the blue line is not tangential to the red curve. ⛔

You may be wondering:

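How can we find the slope of $f$? 🤔

The slope is the gradient $\nabla f$! Recall that the gradient consists of the partial derivatives of a multivariable function.

The tangent plane $z = z_0 + A(x - x_0) + B(y - y_0)$ has slope $A$ in the $x$ direction and slope $B$ in the $y$ direction. Using the partial derivatives of $f$, $f_x$ and $f_y$, evaluated at the point of tangency, we can set:

$$A = f_x(x_0, y_0), \qquad B = f_y(x_0, y_0)$$

Finally, we rewrite this equation with the knowledge that the plane and the function intersect at the point $(x_0, y_0, f(x_0, y_0))$ and by setting $z = L_f(x, y)$ to be clear that the function $L_f$ is the local linear approximation of $f$:

$$L_f(x, y) = f(x_0, y_0) + f_x(x_0, y_0)(x - x_0) + f_y(x_0, y_0)(y - y_0)$$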
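The recipe above (value at the point, plus each partial derivative times the offset from the point) can be sketched in Python as follows. The `linearize` helper, the central-difference step `h`, and the example function are assumptions of mine for illustration, not something taken from the PBD article.

```python
import math


def linearize(f, x0, y0, h=1e-6):
    """Return the local linear approximation of f around (x0, y0).

    The partial derivatives are estimated with central differences,
    so the result is itself only approximate.
    """
    z0 = f(x0, y0)
    fx = (f(x0 + h, y0) - f(x0 - h, y0)) / (2 * h)
    fy = (f(x0, y0 + h) - f(x0, y0 - h)) / (2 * h)

    def approximation(x, y):
        # Tangent plane: value at the point plus slope times offset.
        return z0 + fx * (x - x0) + fy * (y - y0)

    return approximation


# Hypothetical nonlinear function, chosen only for illustration.
def f(x, y):
    return math.sin(x) * y ** 2


L = linearize(f, 1.0, 2.0)

near_error = abs(L(1.01, 2.01) - f(1.01, 2.01))
far_error = abs(L(2.0, 3.0) - f(2.0, 3.0))
print("error near the point:", near_error)
print("error far from the point:", far_error)
```

Close to (1, 2) the plane and the function agree closely; further away the error is much larger, which is the "local" part of local linearization.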

Function rewrite

Recall the linear function that we used is as follows:

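We can rewrite our function in this form using $\mathbf{x} = (x, y)$ and $\mathbf{x}_0 = (x_0, y_0)$:

$$L_f(\mathbf{x}) = f(\mathbf{x}_0) + \nabla f(\mathbf{x}_0) \cdot (\mathbf{x} - \mathbf{x}_0)$$

…which is the same form as the constraint function from the introduction, but where $\mathbf{x}_0 = \mathbf{p}$ and $\mathbf{x} = \mathbf{p} + \Delta\mathbf{p}$:

$$C(\mathbf{p} + \Delta\mathbf{p}) \approx C(\mathbf{p}) + \nabla_{\mathbf{p}} C(\mathbf{p}) \cdot \Delta\mathbf{p}$$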
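To see what a solver can do with a linearized constraint, here is a rough Python sketch, not a real PBD implementation. It assumes a hypothetical constraint $C(\mathbf{p}) = |\mathbf{p}|^2 - d^2$ that keeps a single 2D particle at distance $d$ from the origin. Each iteration sets the linearized constraint to zero and moves the particle along the gradient direction; that choice of direction is the usual one in PBD as far as I understand it, and the rest (function names, numbers, iteration count) is made up for illustration.

```python
def constraint(p, d):
    """C(p) = |p|^2 - d^2, which is zero when p is at distance d from the origin."""
    return p[0] ** 2 + p[1] ** 2 - d ** 2


def constraint_gradient(p):
    """Gradient of C with respect to p."""
    return (2 * p[0], 2 * p[1])


def project(p, d, iterations=10):
    """Repeatedly solve the linearized constraint C(p) + grad C(p) . dp = 0."""
    for _ in range(iterations):
        c = constraint(p, d)
        g = constraint_gradient(p)
        g_sq = g[0] ** 2 + g[1] ** 2
        if g_sq == 0.0:
            break  # gradient vanishes, so no correction direction is available
        # With dp = s * g, the linearized equation gives s = -C / |g|^2.
        s = -c / g_sq
        p = (p[0] + s * g[0], p[1] + s * g[1])
    return p


start = (3.0, 4.0)
result = project(start, d=1.0)
print("projected position:", result)
print("remaining constraint error:", constraint(result, 1.0))
```

Because the linearization is only valid locally, a single step does not land exactly on the constraint here; repeating the step from the new position is what drives the error toward zero.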